About Guangci


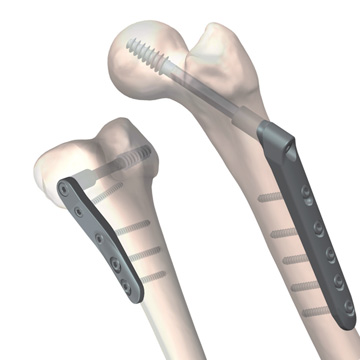

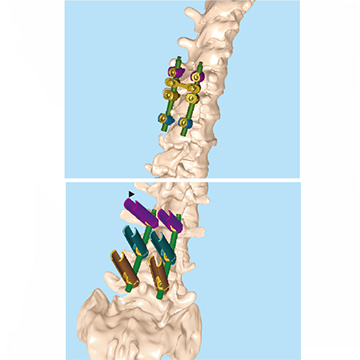

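Our company mainly manufactures orthopedic internal and external fixation products and dental implant products. It was founded in 1992 through a collaboration with Shanghai Sixth People's Hospital to develop orthopedic external fixators. The company is located in Cixi, on the southern shore of the Hangzhou Bay Bridge, one of the world's longest cross-sea bridges. Cixi sits at the center of the economic golden triangle formed by the three metropolises of Shanghai, Hangzhou, and Ningbo, in the Hangzhou Bay area on the southern wing of the Yangtze River Delta economic zone, which gives the company a highly advantageous location. The company currently occupies a site of ...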

Zhejiang Guangci donates medical supplies to the Laos Armed Police General Hospital

2016-10-12

Professor 幸家光雄, President of the Society of Materials Science, Japan, visits Guangci

2015-12-29

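Our company and Donghua University hold a signing ceremony for a university practical training base and industry-university-research cooperation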

2012-07-30

Re-registration completed for the zj-type numerically controlled pneumatic tourniquet

2012-03-15

Deputy Director Shen Jianda of the Cixi bureau visits our company for an on-site review of its current development

2012-02-13